OpenAI logo is seen in this illustration taken, March 11, 2024. (REUTERS/Dado Ruvic)

(Reuters) - OpenAI has seen a number of attempts where its AI models have been used to generate fake content, including long-form articles and social media comments, aimed at influencing elections, the ChatGPT maker said in a report on Wednesday.

Cybercriminals are increasingly using AI tools, including ChatGPT, to aid in their malicious activities such as creating and debugging malware, and generating fake content for websites and social media platforms, the startup said.

So far this year, it has neutralized more than 20 such attempts, including a set of ChatGPT accounts in August that were used to produce articles on topics that included the U.S. elections, the company said.

It also banned a number of accounts from Rwanda in July that were used to generate comments about the elections in that country for posting on social media site X.

None of the activities that attempted to influence global elections drew viral engagement or sustainable audiences, OpenAI added.

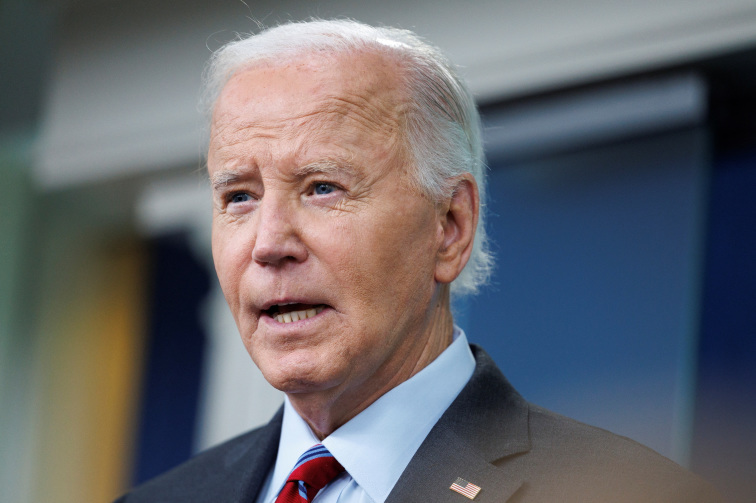

There is increasing worry about the use of AI tools and social media sites to generate and propagate fake content related to elections, especially as the U.S. gears up for its presidential election.

According to the U.S. Department of Homeland Security, the country faces a growing threat from Russia, Iran and China attempting to influence the Nov. 5 elections, including by using AI to disseminate fake or divisive information.

OpenAI cemented its position as one of the world's most valuable private companies last week after a $6.6 billion funding round.

ChatGPT has grown to 250 million weekly active users since its launch in November 2022.

(Reporting by Deborah Sophia in Bengaluru; Editing by Anil D'Silva)
